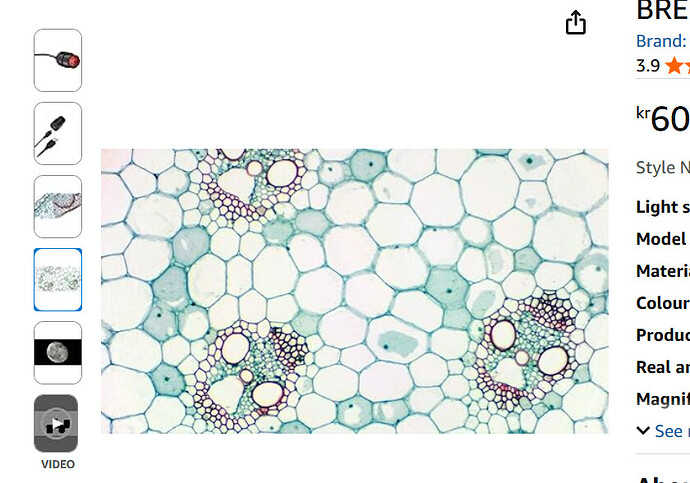

@micke thank you for sharing the videos. Those are not that bad actually, considering the hardware that you are using. One thing I should say (although you might already know), the pictures you see on Amazon are certainly not taken with the product advertised (whether it be the cameras or microscopes), so don’t expect that kind of quality. Also, most of the pictures they put are from sectioned samples. Like this one:

I am fairly sure this is a section that has been sliced down to 20-30um. If what you are imaging is thicker than 50um roughly, you’ll get a lot of blur from out-of-focus regions and it will reduce overall contrast.

This one below is downright fake. It is possible to get such a 3D impression in an image with some microscopes (such as DIC), but certainly not with the one advertised.

When you say you want to improve the quality of images and movies, can you explain what you dislike in the videos you attached? Is it the graininess in the images, or do you find them blurry?

Going to 4K is not a guarantee of better quality because the pixels tend to become smaller as well. As we’ve discussed, the pixels you have are already quite small for microscopy. For the same exposure time, smaller pixels will collect less light. There’s a whole discussion to be had about noise, but if I try to put it simply:

- You have the read noise of your camera. This is a fixed amount of noise per image, so the smaller your signal (or amount of light, if you will), the more difficult it is to distinguish it from the read noise

- You have the shot noise of the light. This is a type of noise that is due to the discrete nature of light (photons), and it scales with the square root of your amount of light. The relationship is not linear, but having less light means your signal gets closer to the shot noise (for example, 100 photons come with about 10 photons of shot noise, while 10,000 photons come with about 100)

One solution would be to increase the exposure time so that your smaller pixels collect the same amount of light. Yes, but:

- You have the thermal noise. This is heat in the sensor that contributes to the false detection of a signal (dark current), and it accumulates (generally linearly) with the exposure time, so increasing the exposure time leads to an increase in thermal noise
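If it helps to see the three noise sources together, here is a rough sketch of a per-pixel signal-to-noise estimate. All the numbers (photon flux, quantum efficiency, read noise, dark current) are made-up placeholders and not values for any particular camera, and I am assuming for simplicity that the dark current per pixel is the same for both pixel sizes.

```python
import math

def pixel_snr(flux_photons_per_um2_s, pixel_size_um, exposure_s,
              read_noise_e=2.0, dark_current_e_per_s=0.5, qe=0.7):
    """Rough per-pixel signal-to-noise ratio (all defaults are hypothetical)."""
    # Signal: photons landing on the pixel area, converted to electrons.
    signal_e = flux_photons_per_um2_s * pixel_size_um ** 2 * exposure_s * qe
    # Thermal (dark) electrons accumulate linearly with exposure time.
    dark_e = dark_current_e_per_s * exposure_s
    # Shot noise variance = signal, dark noise variance = dark electrons,
    # read noise is a fixed amount per readout; variances add up.
    noise_e = math.sqrt(signal_e + dark_e + read_noise_e ** 2)
    return signal_e / noise_e

flux = 10.0          # photons per um^2 per second (placeholder)
base_exposure = 0.1  # seconds (placeholder)

big = pixel_snr(flux, 6.5, base_exposure)
small = pixel_snr(flux, 2.0, base_exposure)

# Lengthen the exposure so the small pixel collects as much light as the big one.
area_ratio = (6.5 / 2.0) ** 2
small_long = pixel_snr(flux, 2.0, base_exposure * area_ratio)

print(f"6.5 um pixel:                 SNR = {big:.2f}")
print(f"2.0 um pixel, same exposure:  SNR = {small:.2f}")
print(f"2.0 um pixel, long exposure:  SNR = {small_long:.2f}")
```

With these placeholder numbers the small pixel is clearly worse at the same exposure, and the longer exposure recovers most of the difference but not all of it, because the extra thermal electrons come along for the ride.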

All cameras are different, and their noise levels vary quite a bit. But my main message is that having more pixels is not always a sign of quality. For reference, one of the standard cameras we use in our microscopy facility is basically 2K, with pixels that have a size of 6.5um.

Take care,

Omni